Interobserver Reliability of Baseline Noncontrast CT Alberta Stroke Program Early CT Score for Intra-Arterial Stroke Treatment S

The disagreeable behaviour of the kappa statistic - Flight - 2015 - Pharmaceutical Statistics - Wiley Online Library

Comparison of the Physical Activity and Sedentary Behaviour Assessment Questionnaire and the Short-Form International Physical Activity Questionnaire: An Analysis of Health Survey for England Data | PLOS ONE

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

A comparison of the Charlson comorbidity index derived from medical records and claims data from patients undergoing lung cancer surgery in Korea: a population-based investigation

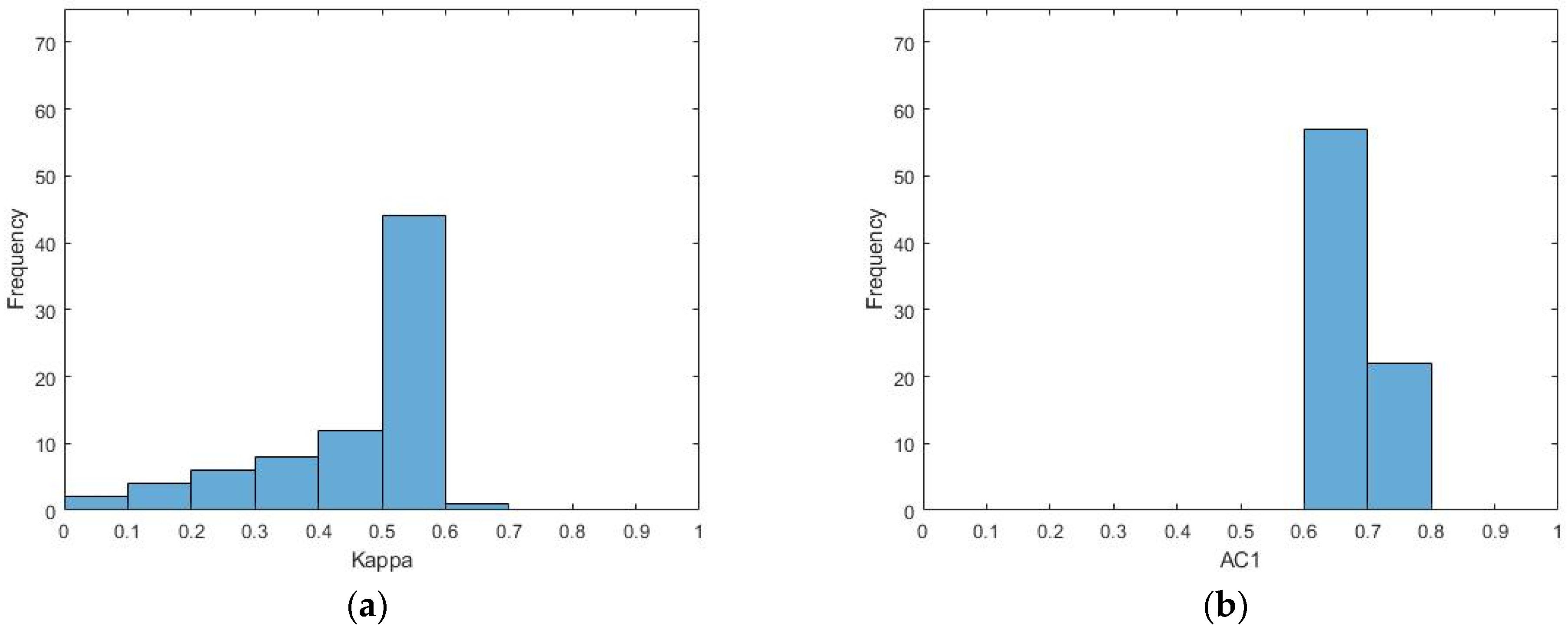

Level of agreement between patient-reported EQ-5D responses and EQ-5D responses mapped from the SF-12 in an injury population

Symmetry | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

Combining ACL Oblique Coronal MRI with the Routine Knee MRI Protocol in the Diagnosis of ACL Bundle Lesions. Can It Add A Value?

What does PABAK mean? PABAK stands for Prevalence-Adjusted Bias-Adjusted Kappa. By AcronymsAndSlang.com
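As a rough illustration of how PABAK differs from Cohen's kappa, the sketch below computes both from a square agreement table for two raters. It assumes rows hold rater A's categories and columns hold rater B's. The function names are hypothetical, and the example counts are invented purely for illustration. It uses the commonly cited forms: kappa is (p_o - p_e) / (1 - p_e) with p_e taken from the raters' marginals, while PABAK fixes p_e at 1/k, which for two categories reduces to 2 * p_o - 1.

```python
from typing import List, Sequence


def observed_agreement(table: Sequence[Sequence[int]]) -> float:
    """Share of items on the diagonal, i.e. both raters chose the same category."""
    n = sum(sum(row) for row in table)
    return sum(table[i][i] for i in range(len(table))) / n


def cohen_kappa(table: Sequence[Sequence[int]]) -> float:
    """Cohen's kappa: (p_o - p_e) / (1 - p_e), with p_e from the raters' marginals."""
    k = len(table)
    n = sum(sum(row) for row in table)
    p_o = observed_agreement(table)
    row_marg: List[float] = [sum(row) / n for row in table]  # rater A (rows, assumed)
    col_marg: List[float] = [sum(table[i][j] for i in range(k)) / n for j in range(k)]  # rater B
    p_e = sum(r * c for r, c in zip(row_marg, col_marg))
    if p_e == 1:
        raise ValueError("kappa is undefined when expected agreement is 1")
    return (p_o - p_e) / (1 - p_e)


def pabak(table: Sequence[Sequence[int]]) -> float:
    """PABAK: kappa with p_e fixed at 1/k, i.e. (k * p_o - 1) / (k - 1).

    For two categories this reduces to 2 * p_o - 1.
    """
    k = len(table)
    return (k * observed_agreement(table) - 1) / (k - 1)


if __name__ == "__main__":
    # Illustrative counts only (hypothetical): rows = rater A, columns = rater B,
    # and category 0 is far more prevalent than category 1.
    table = [[90, 5],
             [5, 0]]
    print(round(observed_agreement(table), 3))  # 0.9
    print(round(cohen_kappa(table), 3))          # -0.053
    print(round(pabak(table), 3))                # 0.8
```

With 90 of 100 items rated identically but one category dominating, kappa comes out slightly negative while PABAK is 0.8. This is the kind of prevalence effect PABAK is meant to adjust for.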

Accuracy of reported service use in a cohort of people who are chronically homeless and seriously mentally ill

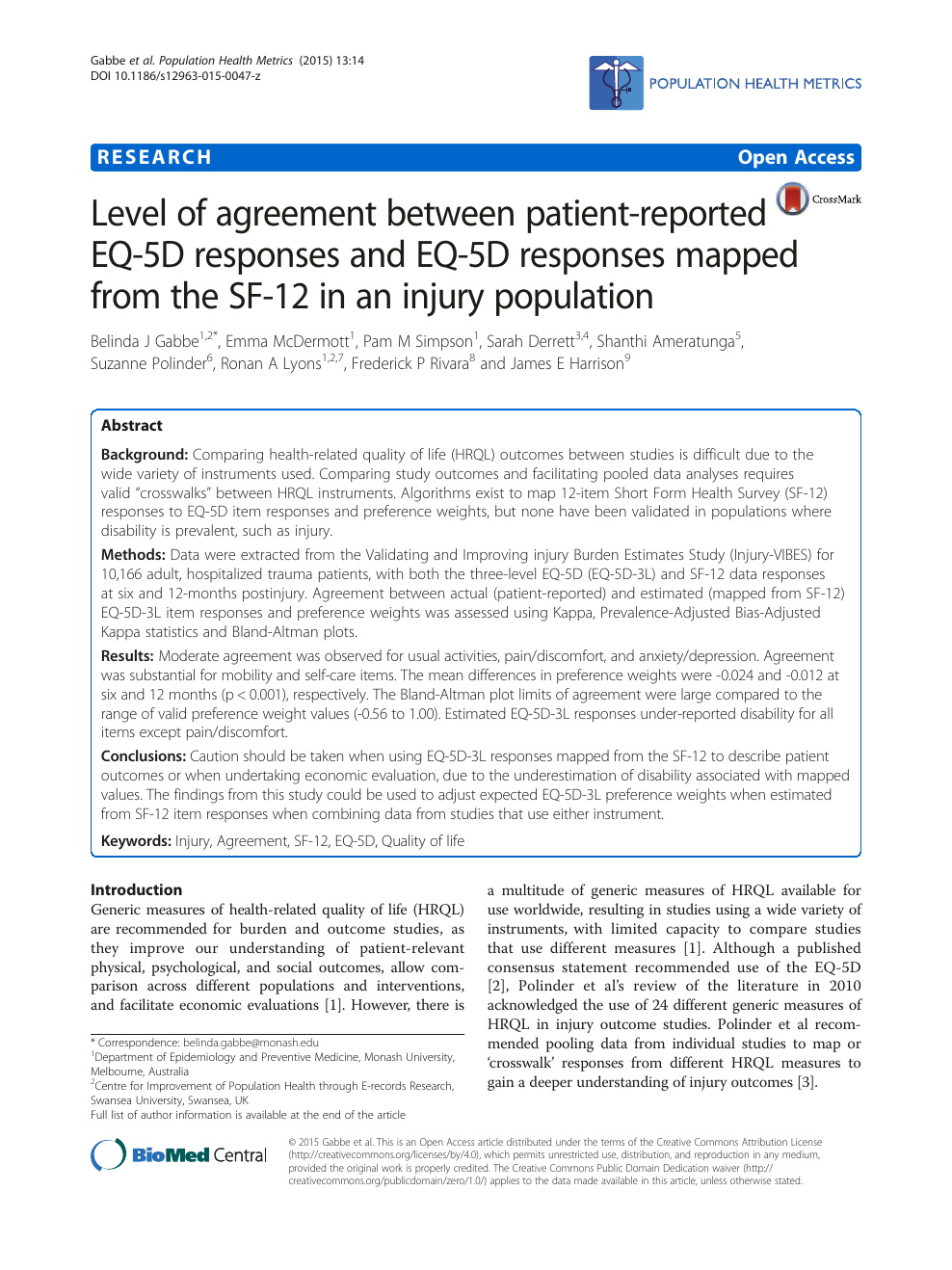

Kappa values and Prevalence-adjusted Bias-adjusted kappa values for...

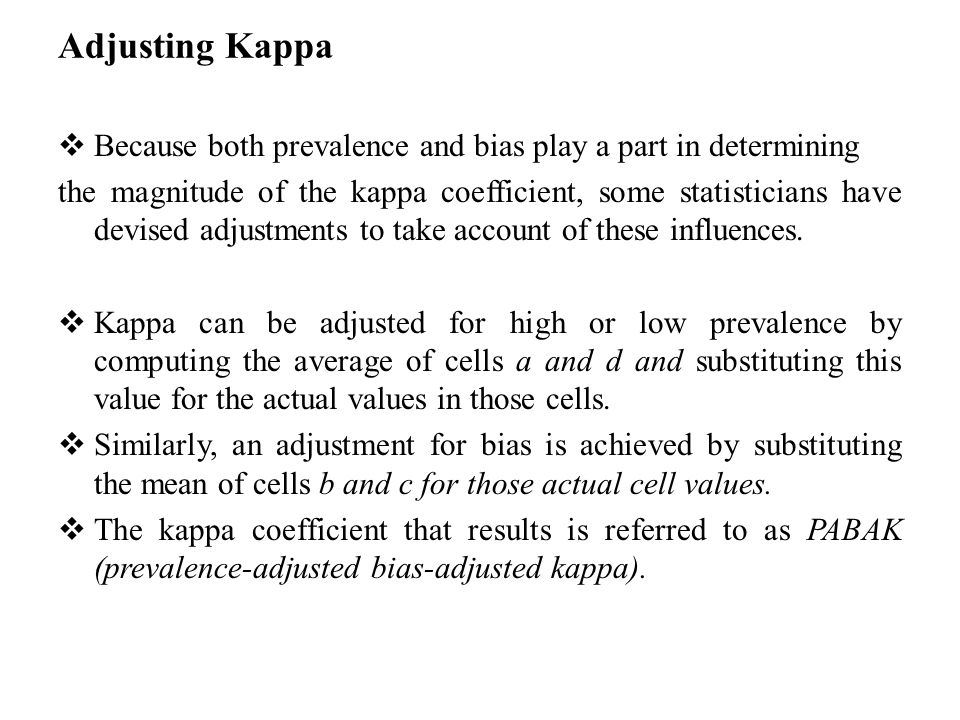

Inter-observer variation can be measured in any situation in which two or more independent observers are evaluating the same thing. Kappa is intended to...

Intra-Rater and Inter-Rater Reliability of a Medical Record Abstraction Study on Transition of Care after Childhood Cancer | PLOS ONE

Diagnostics | Inter- and Intra-Observer Agreement When Using a Diagnostic Labeling Scheme for Annotating Findings on Chest X-rays—An Early Step in the Development of a Deep Learning-Based Decision Support

![Kappa — A Critical Review | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/9b63c13f4f1eea48d64d67a26b2956d80c46b8fa/11-Table3.6-1.png)